The eponymous article by Langdon Winner from 1983 describes how the "deceptive quality of technical objects and processes – […] the fact that they can be 'used' in this way or in that – blinds us to the ways in which they structure what we are able to do" (Winner 1983).

This also applies to audio technology. The normative power of popular technologies, amplified by the media (e.g. video tutorials on social media), increasingly shapes artistic results, with cliché as the vanishing point. The canonization of processes and tools (think of resonance models or granular synthesis) creates a deceptive comfort that inevitably entails a loss of individuality and thus an aesthetic narrowing.

All-round production environments such as Ableton Live clearly increase efficiency, if one takes the amount of music produced per unit of time spent composing as the benchmark. The wealth of available tools and presets leaves little to be desired, and the software is stable and offers little resistance of its own. In addition to elaborately designed effects and "instruments" (elaborate in their interfaces, too), these environments increasingly employ AI algorithms trained on data from other artists in order to reproduce a particular way of working or musical style.

Due to the complexity of these technologies, users typically cannot understand in detail how they work or influence them in depth (i.e. beyond a predefined user interface). In AI systems based on learned data, acoustic-musical parameters are projected into "latent spaces" that are highly abstract and no longer correspond directly to physical processes. We are effectively dealing with black boxes of varying degrees of blackness, which invite an exploratory, searching approach rather than a claim to analytical understanding and control.

The way of working shifts from structures built on fundamental synthesis algorithms, or from fine-grained work with sound snippets à la musique concrète, to conducting curated instruments at a meta-level. Against this background, the idiosyncratic software systems of Herbert Brün, Gottfried Michael Koenig, or Günther Rabl appear as fossils: occasionally dug up, but mostly viewed from a safe distance.

I think that's a pity. Working with sound at a distance, through predefined or externally defined systems, very often yields results that lack the specific, edgy, dirty, human element. The resurgence of modular synthesizers (especially the Eurorack format) can probably be traced back to a desire for individualization and hands-on music-making.

I would like to use the sounding future platform to reflect regularly on aspects of the relationship between people and sound machines, sometimes from a more philosophical-theoretical angle, sometimes from a practical, craft-oriented one, following the imperative of Lewis et al. (2018):

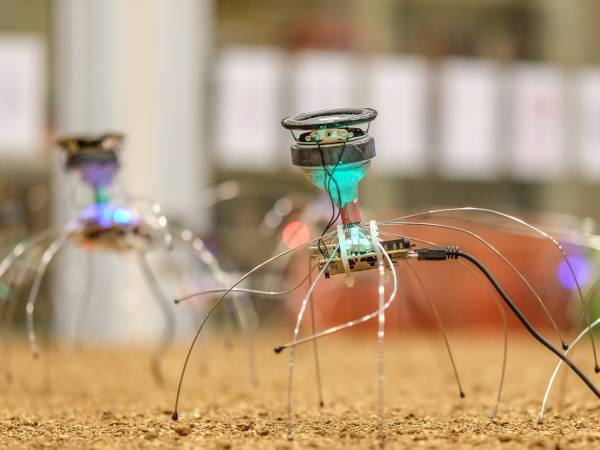

As we manufacture more machines with increasing levels of sentient-like behaviour, we must consider how such entities fit within the kin-network. (Jason Edward Lewis)

This is also the guiding principle of the artistic research project Spirits in Complexity – Making kin with experimental music systems, which will start in April 2024 with funding from the Austrian Science Fund (FWF). As a cooperation between the University of Music and Performing Arts Vienna (mdw) and the Johannes Kepler University Linz (JKU), the project will examine partnerships between humans and music technology, i.e. between the human and the non-human, along with the strategies and dynamics these relationships involve. Its methodological approaches, pursued through artistic experimental systems, will span the spectrum from instrumental technologies through electroacoustics and installation art to generative AI systems.

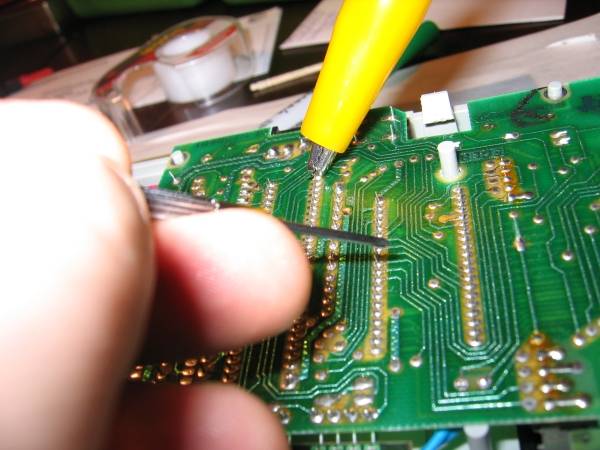

In addition, a cooperation project between the University of Applied Arts, the mdw, and the Phonogrammarchiv of the Austrian Academy of Sciences has been running since mid-2023 under the name Applied/Experimental Sound Research Lab (AESRL). Its Sound Projection Lab sub-project, supervised by the mdw, is explicitly concerned with individualization, low-threshold access, and DIY/maker culture. Within university research and teaching, we are developing a flexible, modular, and mobile sound projection system that will also be available on loan for (artistic) research purposes. The basic configuration will comprise 16 modules with 16.1 loudspeaker channels each. All aspects of the loudspeaker and amplifier construction, the system, and the sound projection software will be developed in-house and documented comprehensibly as open source. The aim is to provide an easily accessible, reproducible, and affordable platform for experimental work with sound in space.

It seems essential to me not to retreat into passive use when dealing with complex technologies, but to define for ourselves how we handle these systems and to shape our lives with them actively and critically: in dialogue with them rather than in domination over them. According to Grüny (2022), this applies in particular to the increasingly pervasive AI systems:

Instead of the greatest possible control, it is now a matter of allowing oneself to be deliberately distracted by the resistance and objections of uncontrollable instances in order to produce results that one could not have produced oneself and that hopefully go beyond what one's own abilities can achieve instead of falling behind them. (Christian Grüny, translated by the author)

Thomas Grill

Thomas Grill works as an artistic and scientific researcher on sound and its context. As a composer and performer, he focuses on concept-oriented sound art, electro-instrumental improvisation and compositions for loudspeakers. He researches and teaches at the University of Music and Performing Arts in Vienna, where he directs the course for Electroacoustic and Experimental Music (ELAK) and is deputy director of the Artistic Research Center (ARC).

Article translations are machine translated and proofread.