
"Proyecto Carrusel" was born in Argentina as an artistic work during the COVID-19 lockdown. It was supported by the FNA (National Fund for the Arts) and by the "Cultura Inmersiva" award of the Cultural Institute of the Province of Buenos Aires, and it has since continued its development independently. Idea and direction: Esteban Gonzalez; programming: Federico Marino.

At the same time, as the project's director, I decided to develop the programming of the virtual space further, turning it into an open platform where other artists could present their works virtually. In other words, the goal was to create an open-source immersive virtual auditorium.

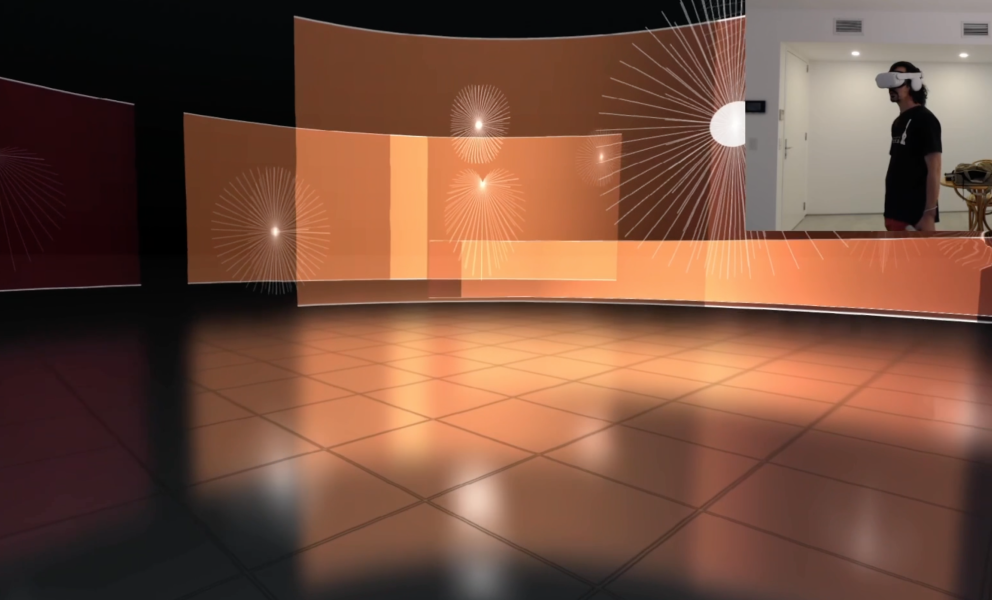

Currently, visitors can experience the immersive version of "Carrusel", the work that gives the project its name. The application works as an immersive virtual auditorium, and the latest version (3.0) is fully functional in VR and compatible with Meta Quest. By the end of 2024, we aim to complete a set of tools for positioning moving sound sources and for designing the entire visual scene without programming skills. This work was only the beginning of the project: a small sample of how the app could grow into a free-access platform for immersive art that runs in a browser.

Concept

The virtual and the real confront us with the limit of the tangible, of what our body can feel through touch, while opening new possibilities that combine the domain of code with a pseudo-cyborg perception of the device as an extension of body and mind. The device is a window through which we look at portions of the world we know while also giving reality to parallel universes: spaces that emerge from human action in the dimension of data.

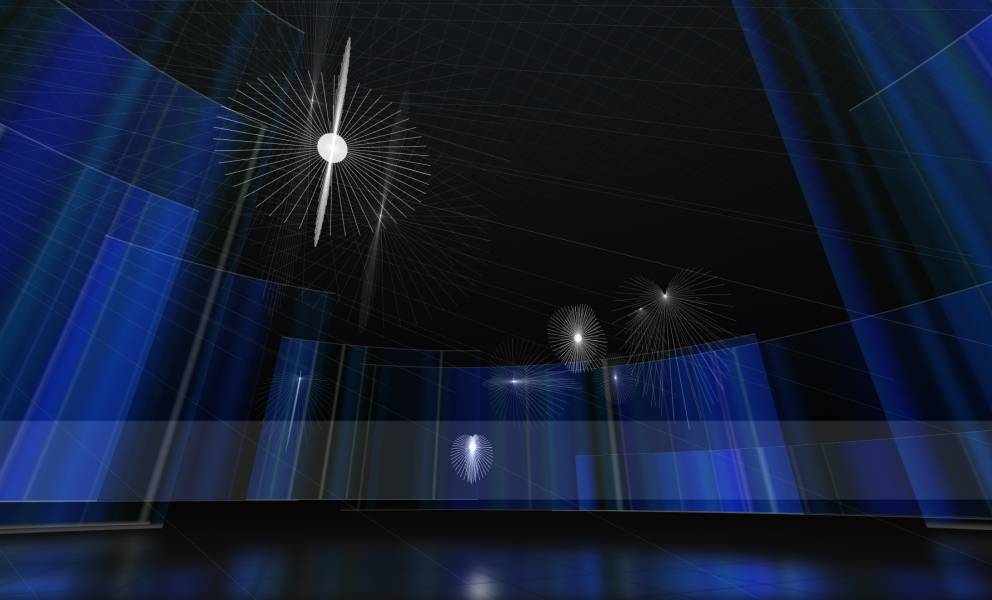

In this context, "Carrusel" generates a multiplatform immersive visual and sound landscape in real time. Viewers/users become part of the composition and, at the same time, participants and collaborators in creating this virtual territory and the elements that compose it. The position of their body, the orientation of their head, and how close they move to certain points of the visual space determine the application's actions in real time, reconfiguring the visual and sound scene.
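
The interaction described above, where the listener's position, head orientation, and distance to certain points reshape the scene, can be sketched as a per-frame check. This is a minimal sketch under stated assumptions, not the Carrusel code: the zone objects, their `radius`, and the functions `updateZones` and `isFacing` are hypothetical. In a Three.js app, the listener position and forward vector could come from the camera, for example its world position and `camera.getWorldDirection(...)`.

```javascript
// Minimal sketch of proximity- and gaze-driven triggers (all names are hypothetical).
// Positions and directions are plain {x, y, z} objects in scene units (e.g. metres).

function distance(a, b) {
  return Math.hypot(a.x - b.x, a.y - b.y, a.z - b.z);
}

// Compares the listener position with each zone's trigger radius and reports which
// zones were entered or exited since the previous call.
// `insideSet` holds the ids of zones the listener was inside last time; it is updated in place.
function updateZones(zones, listenerPos, insideSet) {
  const entered = [];
  const exited = [];
  for (const zone of zones) {
    const inside = distance(zone.center, listenerPos) <= zone.radius;
    const wasInside = insideSet.has(zone.id);
    if (inside && !wasInside) {
      insideSet.add(zone.id);
      entered.push(zone.id);
    } else if (!inside && wasInside) {
      insideSet.delete(zone.id);
      exited.push(zone.id);
    }
  }
  return { entered, exited };
}

// True if `target` lies within `maxAngleDeg` degrees of the listener's forward direction.
function isFacing(forward, listenerPos, target, maxAngleDeg) {
  const dx = target.x - listenerPos.x;
  const dy = target.y - listenerPos.y;
  const dz = target.z - listenerPos.z;
  const lenToTarget = Math.hypot(dx, dy, dz);
  const lenForward = Math.hypot(forward.x, forward.y, forward.z);
  if (lenToTarget === 0 || lenForward === 0) return false;
  const cosAngle = (dx * forward.x + dy * forward.y + dz * forward.z) / (lenToTarget * lenForward);
  return cosAngle >= Math.cos((maxAngleDeg * Math.PI) / 180);
}
```

Reporting transitions (`entered`, `exited`) rather than the raw inside/outside state means a sound or visual change fires once when the listener crosses a boundary instead of on every frame.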

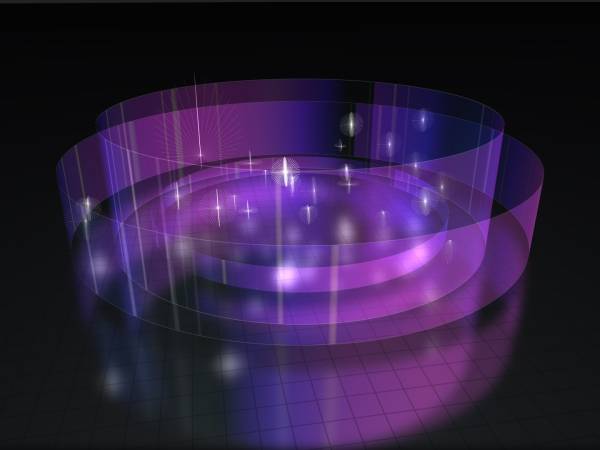

"Carrusel" is presented as a large dark room of diffuse horizons and dreamlike sounds that constantly feedback each other and possess their orbits of movement and transformation. A gallery of circular screens designed by visual artist Fernando Molina as transparent audio-reactive and generative walls that display color and abstractions accompany the scene from the visual.

The work draws on the quantum concept of the "tunnel effect", which bends reality and allows the user to pass through the walls, pushing the limits of the natural world at the behest of the senses and giving rise to new realities.

Challenges and scopes

Below, I briefly recount some of the challenges we faced during our research and development in code-driven immersive audio.

The application is built with Three.js, JavaScript, and HTML, among other technologies. Because it runs on web technology, it does not need separate versions for different devices (iOS, Android, Windows, Mac, etc.).

One of the hurdles was producing an immersive audio mix for use in a browser. First, it was essential to analyze and break down the binaural sound composition in order to define how the sound sources move in space. This meant classifying the main audio sources and their spatial components (reverberation, echoes, and the character of the room). Once we had built this 3D map of source locations, displacements, and spatial trajectories, we moved on to mixing. This is where a conflict arose between the binaural space and the code-controlled audio.
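
Once such a 3D map exists, each source's displacement can be expressed as a function of time. The following is a minimal sketch assuming two hypothetical movement types, a circular orbit and a straight displacement between two points; the parameter names are illustrative and not taken from the Carrusel code. In a browser, the returned coordinates could be written to a `PannerNode`'s `positionX`, `positionY`, and `positionZ` AudioParams.

```javascript
// Positions are plain {x, y, z} objects; t is the playback time in seconds.
// Both movement types and their parameter names are hypothetical.

// Circular orbit in the horizontal (x-z) plane around `center`.
// One full revolution takes `periodSec`; `phase` (radians) sets the starting angle,
// and `height` offsets the source vertically from the centre.
function orbitPosition(t, { center, radius, periodSec, phase = 0, height = 0 }) {
  const angle = phase + (2 * Math.PI * t) / periodSec;
  return {
    x: center.x + radius * Math.cos(angle),
    y: center.y + height,
    z: center.z + radius * Math.sin(angle),
  };
}

// Straight-line displacement from `from` to `to` between startSec and endSec.
// Before startSec the source stays at `from`; after endSec it stays at `to`.
function linearPosition(t, { from, to, startSec, endSec }) {
  const u = Math.min(1, Math.max(0, (t - startSec) / (endSec - startSec)));
  return {
    x: from.x + (to.x - from.x) * u,
    y: from.y + (to.y - from.y) * u,
    z: from.z + (to.z - from.z) * u,
  };
}
```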

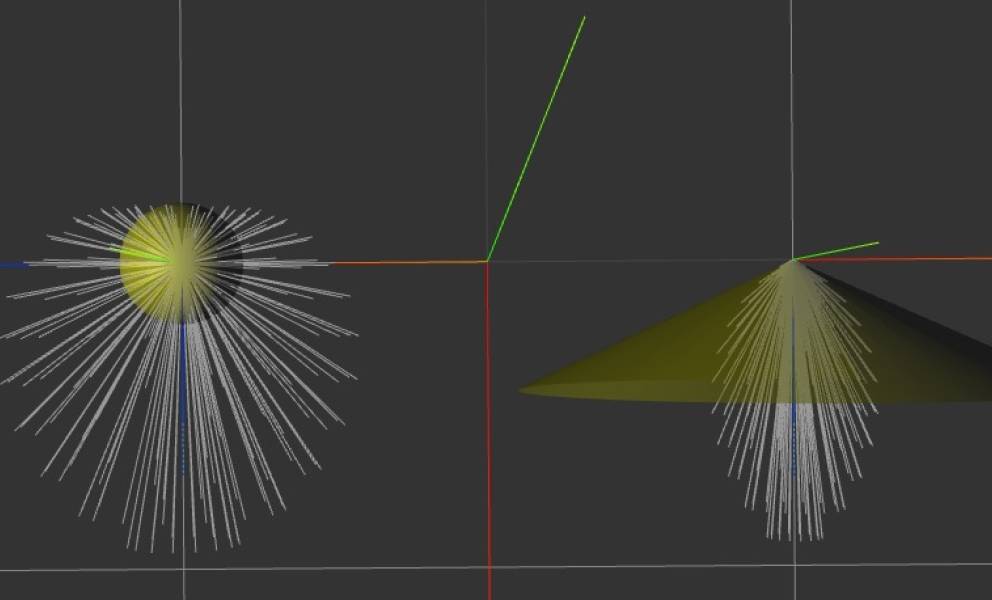

Leaving aside the room's contribution, a louder sound source suggests a closer source and vice versa. In a 2D or binaural mix, we simply raise or lower its amplitude, but if the source sits in an immersive 3D space, we must take into account its sound radiation pattern, the maximum and minimum amplitudes within that pattern, and its position relative to the user. To shape a source's amplitude in certain areas of the virtual space, we can control its amplitude value, the decay of its radiation pattern, or the pattern itself; the height parameter also allows a slight adjustment of the amplitude by moving the source closer or farther away. This also forced us to revisit the composition and think about how the music is heard from different angles, and in some cases even to modify the sounds' cutoff frequencies, filtering, and morphological texture characteristics (for example, sounds with greater granularity are easier to locate in space than others).
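
The Web Audio API's `PannerNode` exposes parameters that correspond to these ideas: `distanceModel`, `refDistance`, `maxDistance`, and `rolloffFactor` control how amplitude decays with distance, while `coneInnerAngle`, `coneOuterAngle`, and `coneOuterGain`, together with the node's orientation, approximate a directional radiation pattern. The sketch below re-implements those gain formulas as described in the Web Audio API specification so they can be checked outside a browser; it is illustrative only, and any parameter values used with it are placeholders rather than the project's actual settings.

```javascript
// Gain formulas modelled on the Web Audio API PannerNode specification.
// Defaults follow the spec: refDistance 1, maxDistance 10000, rolloffFactor 1,
// coneInnerAngle 360, coneOuterAngle 360, coneOuterGain 0.

// Distance attenuation for the "linear", "inverse" and "exponential" distance models.
function distanceGain(d, { model = "inverse", refDistance = 1, maxDistance = 10000, rolloffFactor = 1 } = {}) {
  if (model === "linear") {
    const f = Math.min(1, Math.max(0, rolloffFactor)); // the linear model clamps rolloff to [0, 1]
    const dc = Math.max(Math.min(d, maxDistance), refDistance);
    return 1 - (f * (dc - refDistance)) / (maxDistance - refDistance);
  }
  if (model === "exponential") {
    return Math.pow(Math.max(d, refDistance) / refDistance, -rolloffFactor);
  }
  // "inverse", the PannerNode default
  return refDistance / (refDistance + rolloffFactor * (Math.max(d, refDistance) - refDistance));
}

// Directional ("cone") gain: 1 inside the inner cone, coneOuterGain outside the outer cone,
// linear interpolation in between. Cone angles are full widths in degrees.
function coneGain(sourcePos, orientation, listenerPos,
                  { coneInnerAngle = 360, coneOuterAngle = 360, coneOuterGain = 0 } = {}) {
  if (coneInnerAngle === 360 && coneOuterAngle === 360) return 1; // omnidirectional source
  const vx = listenerPos.x - sourcePos.x;
  const vy = listenerPos.y - sourcePos.y;
  const vz = listenerPos.z - sourcePos.z;
  const vLen = Math.hypot(vx, vy, vz);
  const oLen = Math.hypot(orientation.x, orientation.y, orientation.z);
  if (vLen === 0 || oLen === 0) return 1; // degenerate vectors: treat as on-axis
  const cos = (vx * orientation.x + vy * orientation.y + vz * orientation.z) / (vLen * oLen);
  const angle = (Math.acos(Math.min(1, Math.max(-1, cos))) * 180) / Math.PI;
  const inner = Math.abs(coneInnerAngle) / 2;
  const outer = Math.abs(coneOuterAngle) / 2;
  if (angle <= inner) return 1;
  if (angle >= outer) return coneOuterGain;
  const x = (angle - inner) / (outer - inner);
  return (1 - x) + coneOuterGain * x;
}
```

The node applies the product of the two gains. Raising a source's height (its y coordinate) increases its distance to the listener and so lowers `distanceGain`, which is the kind of slight adjustment described above. In a browser, the same parameters would be set directly on the node, for example `panner.rolloffFactor = 1.5` or `panner.coneOuterGain = 0.2`.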

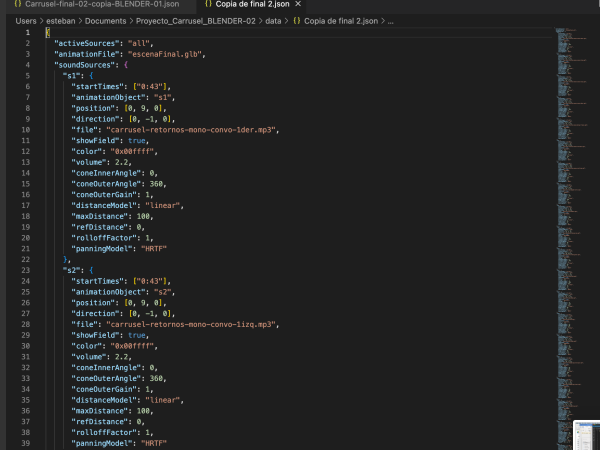

Another challenge involved music composition, file size, and bandwidth limits. When I composed the first binaural version of "Carrusel", the work was a stereo .wav file almost 8 minutes long. In the first attempts to bring "Carrusel" into the application, I used about 22 mono .mp3 files (CBR, 320 kbps); in the latest version, about 30. This increased the project's total data volume and the browser's memory usage. One way to reduce the data volume was to cut the audio files into multiple segments and remove silent periods, which in turn made the programming more complex: we had to set timers to trigger each segment at the precise moment in the composition.
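
The trade-off can be quantified with simple arithmetic: a constant-bitrate MP3 at 320 kbps uses 320,000 bits, or 40,000 bytes, per second (ignoring headers and tags), so an 8-minute (480 s) stream comes to about 19.2 MB, and trimming silences means only the audible segments are downloaded. The sketch below computes that saving and converts each segment's position in the composition into a start time. The segment objects and function names are hypothetical. Also note that instead of JavaScript timers, a browser implementation could pass each computed time to `AudioBufferSourceNode.start(when)`, which schedules playback on the `AudioContext` clock; that is an alternative technique, not necessarily the one the project uses.

```javascript
// Size of a constant-bitrate MP3 stream in bytes (audio data only; headers/tags ignored).
function cbrBytes(kbps, seconds) {
  return (kbps * 1000 * seconds) / 8;
}

// Bytes saved by shipping only the audible segments of a track instead of the full-length file.
// Each segment is a hypothetical {file, offsetSec, durationSec} object.
function segmentSavings(trackSeconds, segments, kbps = 320) {
  const kept = segments.reduce((sum, s) => sum + s.durationSec, 0);
  return cbrBytes(kbps, trackSeconds) - cbrBytes(kbps, kept);
}

// Converts each segment's offset in the composition into an absolute start time.
// t0 is the moment playback of the whole piece began (e.g. audioContext.currentTime).
function scheduleSegments(segments, t0) {
  return [...segments]
    .sort((a, b) => a.offsetSec - b.offsetSec)
    .map((s) => ({ file: s.file, when: t0 + s.offsetSec }));
}

// Segments starting within the next `lookaheadSec` seconds: candidates to fetch and
// decode now, so they are ready before their trigger time.
function segmentsDueSoon(segments, elapsedSec, lookaheadSec) {
  return segments.filter(
    (s) => s.offsetSec >= elapsedSec && s.offsetSec < elapsedSec + lookaheadSec
  );
}
```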

During the project's research and development, we detected a flaw in the Web Audio API's PannerNode, which we reported to the Google Chrome team and which was accepted as a bug. The flaw is a clipping artifact related to the HRTF (head-related transfer function) processing and is most noticeable at frequencies below 300 Hz. We were recently informed that the bug is being addressed in upcoming Google Chrome updates. More details are in the bug report "Webaudio panner crackling sound when position is changed".

What's next

At "Proyecto Carrusel", we are currently programming a set of teaching examples and tools so that artists without programming skills can work with our platform and create their own immersive works.

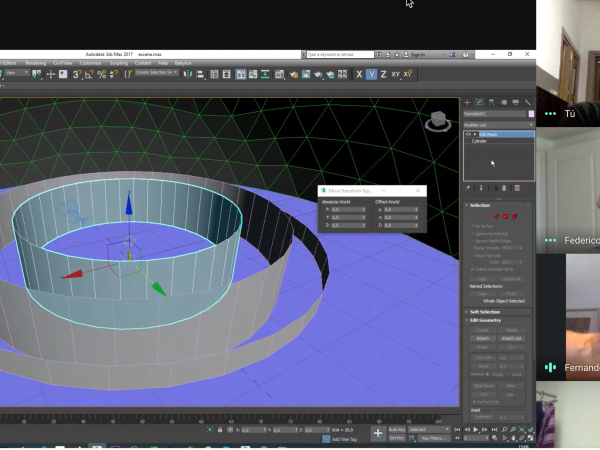

We are also developing a new internal application that lets us design both the 3D visual scene and the sound scene in external programs such as 3ds Max or Blender, including the locations, displacements, and complex orbits of sound sources and visual objects. The idea is to define sound source trajectories from keyframes or animation tracks instead of programmatically setting predefined movement types. To do this, we are working on extracting the animation tracks of a 3D object from a glTF file and transferring its keyframes to the sound sources.
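
In glTF 2.0, an animation channel that targets a node's `translation` points to a sampler whose `input` accessor holds keyframe times in seconds and whose `output` accessor holds one vec3 per keyframe; the default interpolation is `LINEAR`. Below is a minimal sketch of transferring such a track to a sound source, assuming the times and values have already been read into plain arrays (Three.js's `GLTFLoader`, for example, exposes them as the `times` and `values` of a `KeyframeTrack` named like `<node>.position` inside each `AnimationClip`). The function name is hypothetical, only `LINEAR` interpolation is handled (not `STEP` or `CUBICSPLINE`), and parent-node transforms are ignored, so the result is in the node's local space.

```javascript
// Samples a glTF "translation" track with LINEAR interpolation (hypothetical helper).
// times:  keyframe times in seconds, strictly increasing
// values: flat array [x0, y0, z0, x1, y1, z1, ...], one vec3 per keyframe
// Outside the keyframe range, the first or last value is held.
function sampleTranslation(times, values, t) {
  const n = times.length;
  if (n === 0) throw new Error("empty animation track");
  const vec = (i) => ({ x: values[3 * i], y: values[3 * i + 1], z: values[3 * i + 2] });
  if (t <= times[0]) return vec(0);
  if (t >= times[n - 1]) return vec(n - 1);

  // Binary search for the keyframe pair with times[lo] <= t < times[hi].
  let lo = 0;
  let hi = n - 1;
  while (hi - lo > 1) {
    const mid = (lo + hi) >> 1;
    if (times[mid] <= t) lo = mid;
    else hi = mid;
  }

  const u = (t - times[lo]) / (times[hi] - times[lo]);
  const a = vec(lo);
  const b = vec(hi);
  return {
    x: a.x + (b.x - a.x) * u,
    y: a.y + (b.y - a.y) * u,
    z: a.z + (b.z - a.z) * u,
  };
}
```

Each frame, the sampled position could be written to the corresponding source's panner, so the sound follows the same keyframes as the animated object in Blender or 3ds Max.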

In summary, the sound scene is defined by a soundscene.json file, which lists the sources with their sound characteristics, associated MP3 file, and audio stream trigger times, and by an animation glTF file containing the animated 3D objects that represent the movement of each source.
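
The soundscene.json format is not published here, so the following is only a guess at its shape: every field name (`animation`, `sources`, `id`, `file`, `node`, `gain`, `refDistance`, `rolloffFactor`, `triggers`) is hypothetical. The sketch shows one way such a file could pair each MP3 source and its trigger times with the glTF node whose animation drives it, plus a small loader that validates the file before playback.

```javascript
// Hypothetical soundscene.json content; all field and file names are illustrative assumptions.
const EXAMPLE_SOUNDSCENE = `{
  "animation": "scene.gltf",
  "sources": [
    {
      "id": "bells",
      "file": "bells.mp3",
      "node": "BellsEmitter",
      "gain": 0.8,
      "refDistance": 1,
      "rolloffFactor": 1.5,
      "triggers": [95.5, 0]
    }
  ]
}`;

// Parses and validates a sound scene description.
// Throws on structural errors; returns the scene with trigger times sorted
// and a default gain of 1 where none is given.
function parseSoundScene(text) {
  const scene = JSON.parse(text);
  if (!Array.isArray(scene.sources)) {
    throw new Error("soundscene: 'sources' must be an array");
  }
  const ids = new Set();
  for (const src of scene.sources) {
    if (typeof src.id !== "string" || ids.has(src.id)) {
      throw new Error(`soundscene: missing or duplicate source id: ${src.id}`);
    }
    ids.add(src.id);
    if (typeof src.file !== "string" || !src.file.toLowerCase().endsWith(".mp3")) {
      throw new Error(`soundscene: source ${src.id} must reference an .mp3 file`);
    }
    if (!Array.isArray(src.triggers) || src.triggers.some((t) => typeof t !== "number" || t < 0)) {
      throw new Error(`soundscene: source ${src.id} needs non-negative numeric trigger times`);
    }
    src.triggers = [...src.triggers].sort((a, b) => a - b);
    src.gain = src.gain ?? 1;
  }
  return scene;
}
```

Keeping the sound data (files, gains, trigger times) in JSON and the motion in glTF lets each part be edited in the tool best suited to it, with the hypothetical `node` field acting as the link between them.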

We believe that this new development will expand the accessibility of our application.

While our team currently works pro bono, we are seeking financial support to move these new developments forward and to expand our team of collaborators and artists.

We expect to finish the platform's new developments by the end of 2024. We will then open a call for submissions of immersive audiovisual works for the browser and release the code so that artists and programmers who love immersive art can use it and expand their creative horizons.

Federico Marino (programming): computer engineer, programmer, and visual artist from Buenos Aires. He works as a freelance software developer and teaches at the Faculty of Engineering of the U.B.A. He specializes in developing 3D visualization software based on WebGL and Three.js, as well as VR applications. His virtual reality work "Lumicles", created with Esteban Gonzalez, was presented at the CCK. He experiments with new ways of combining technology with generative art and of creating images through code.

Esteban Gonzalez and Federico Marino wrote this article for Sounding Future.

Explore the project: https://proyectocarrusel.com.ar

Esteban Gonzalez

Argentine multidisciplinary artist, researcher, performer, and luthier. He works with technology applied to art and music in several projects that combine lutherie, experimentation, and sound art. He has taught in projects for UNESCO, Música Expandida UNSAM, UNA (Universidad Nacional de las Artes), Consejo Federal de la Danza, CASO (Centro de Arte Sonoro), and others. His work has been presented in Argentina, Brazil, Germany, the USA, Norway, Paraguay, Spain, Italy, and Japan.

Article translations are machine translated and proofread.
