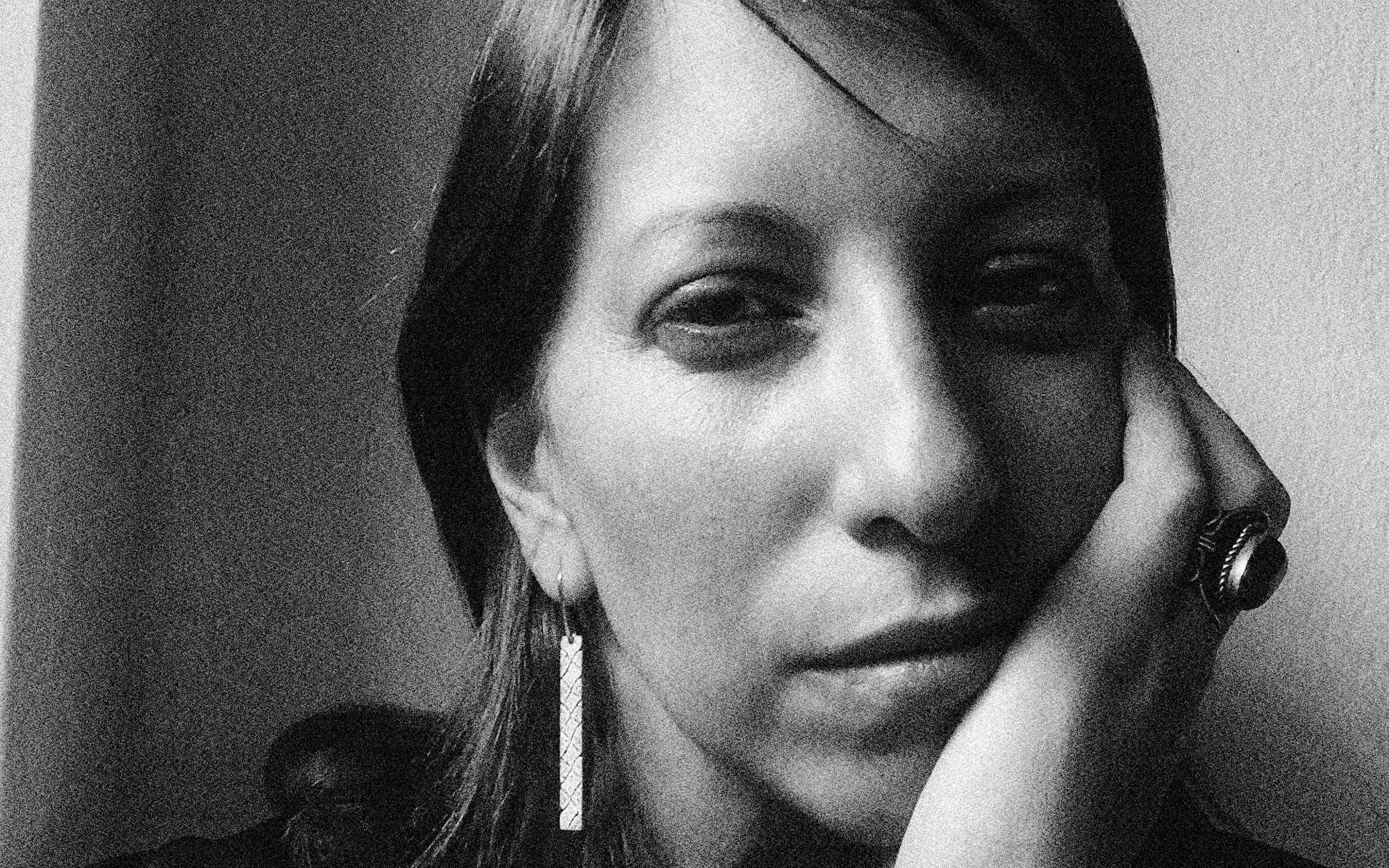

Sinan Bökesoy

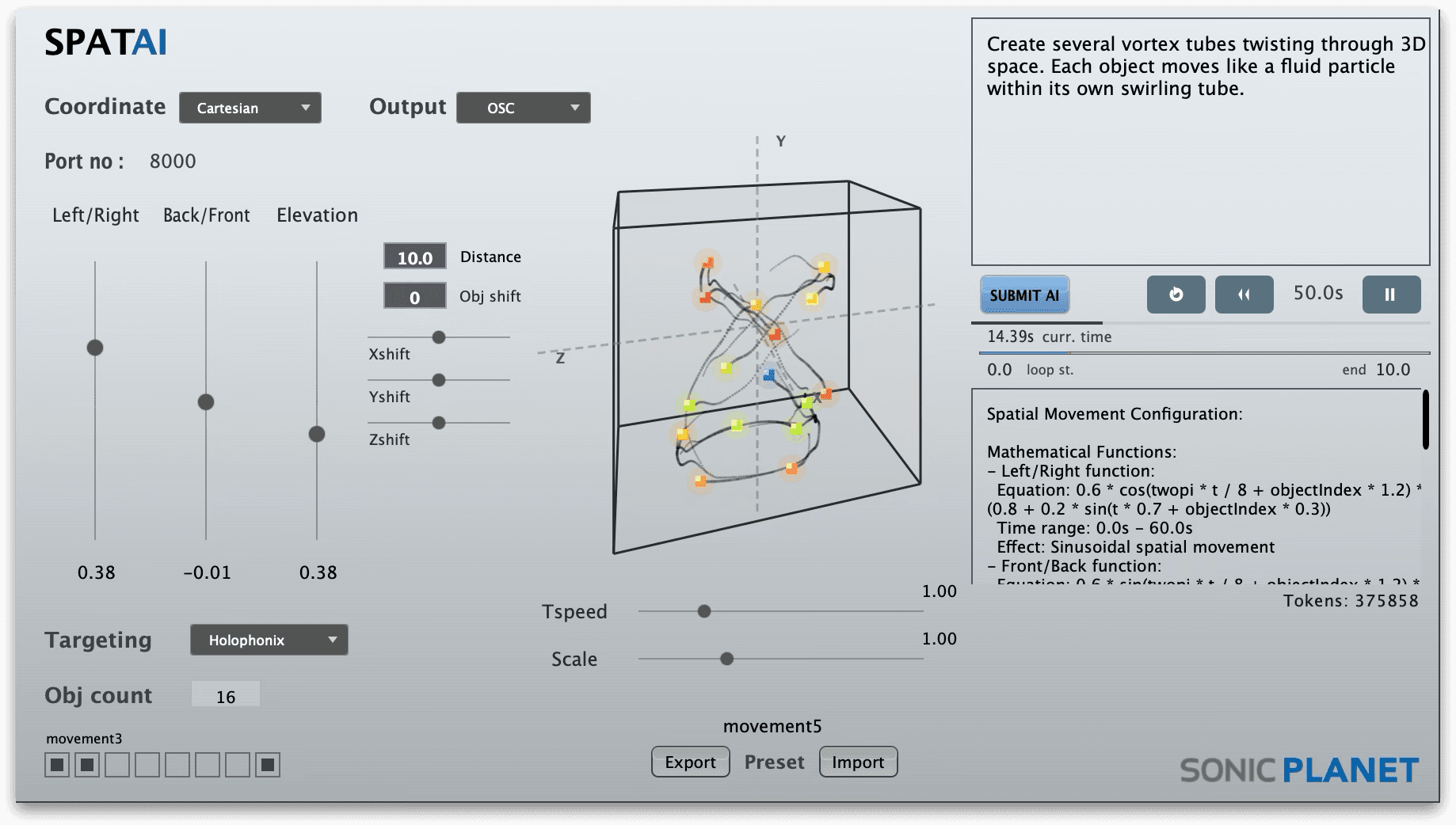

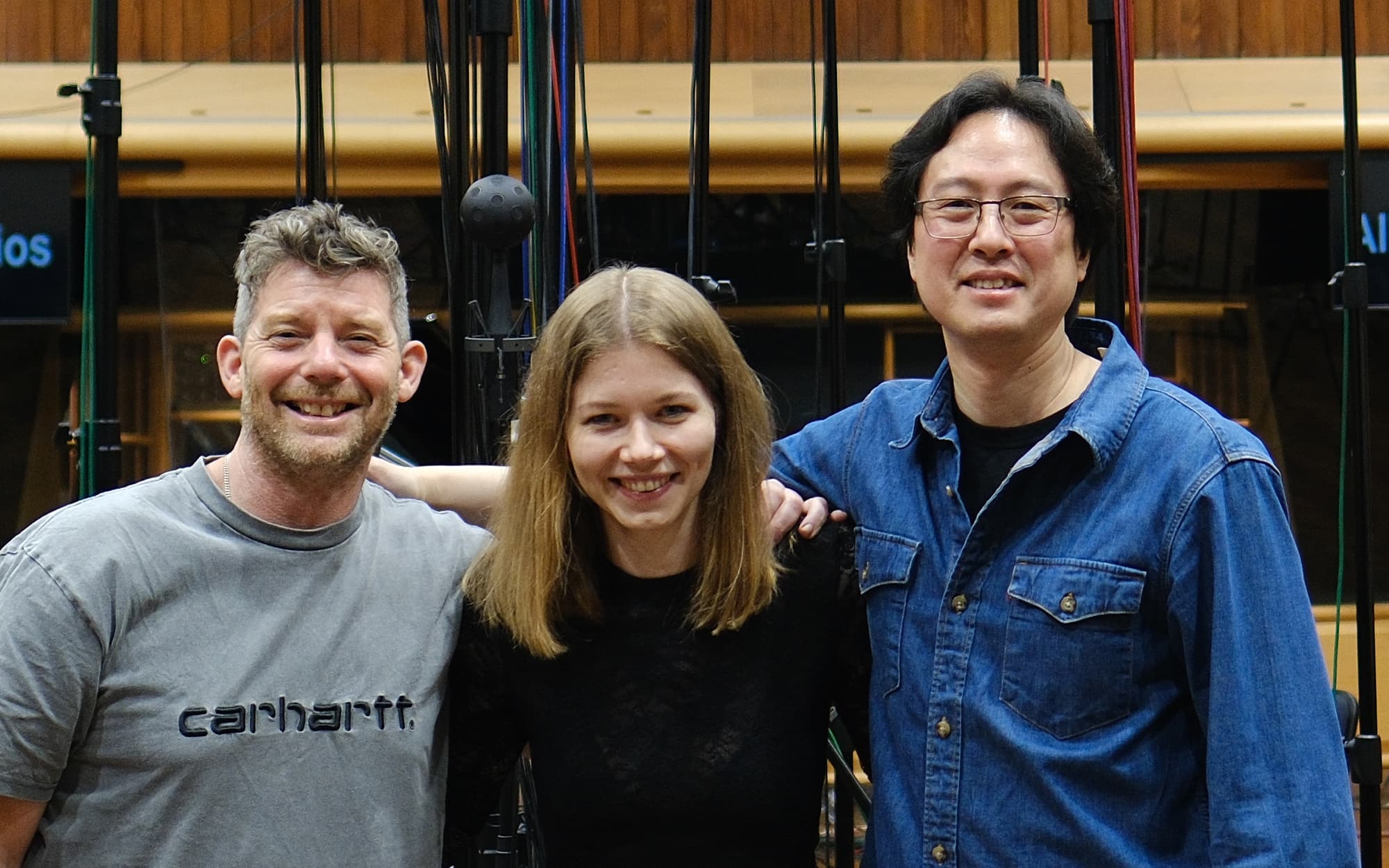

Sinan Bökesoy is a composer, sound artist, and principal developer of sonicLAB and sonicPlanet. He holds a Ph.D. in computer music from the University of Paris 8, completed under the direction of Horacio Vaggione, and specializes in the synthesis of self-evolving sonic structures. Inspired by composers such as Xenakis, his work uses algorithmic approaches, mathematical models, and physical processes to generate new kinds of audio synthesis. Bökesoy aims for a balanced workflow that bridges theory and practice, as well as artistic and scientific approaches. He has presented his work at venues such as Ars Electronica / STARTS Prize, IRCAM, and the Radio France Festival, and at international biennials and art galleries. He has published and presented at conferences including ICMC, NIME, JIM, and SMC, as well as in the Computer Music Journal (MIT Press).