Umanesimo Artificiale

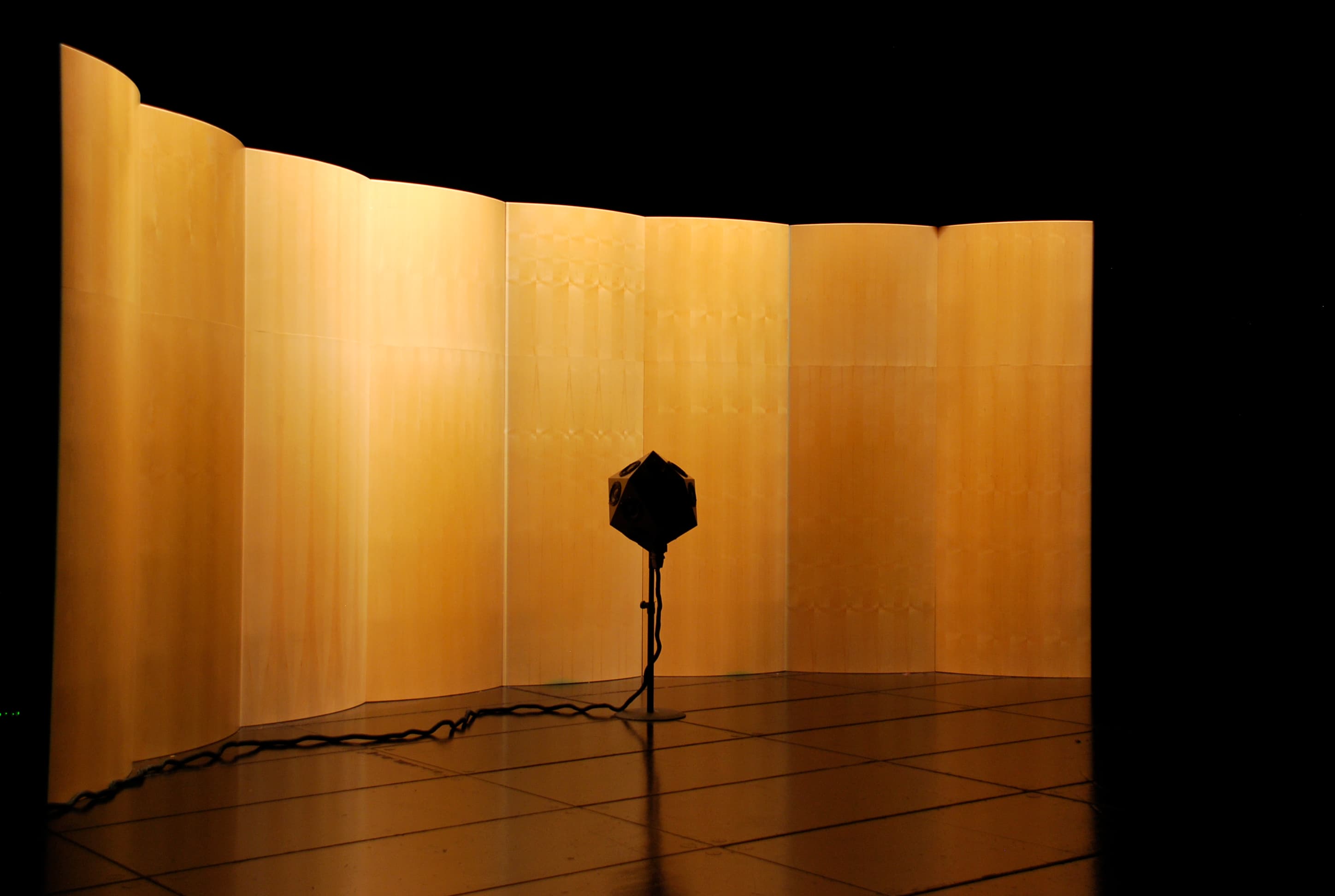

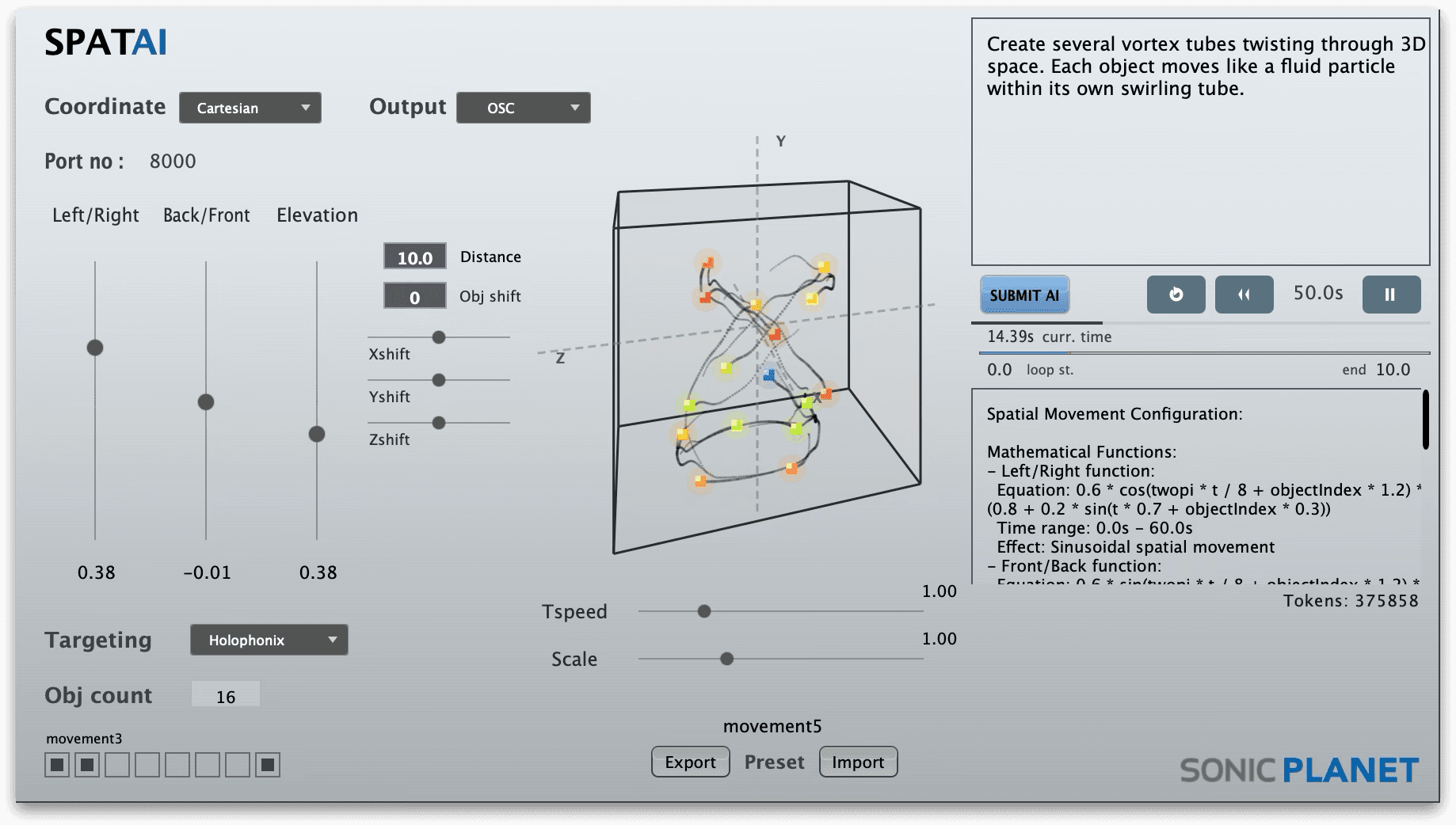

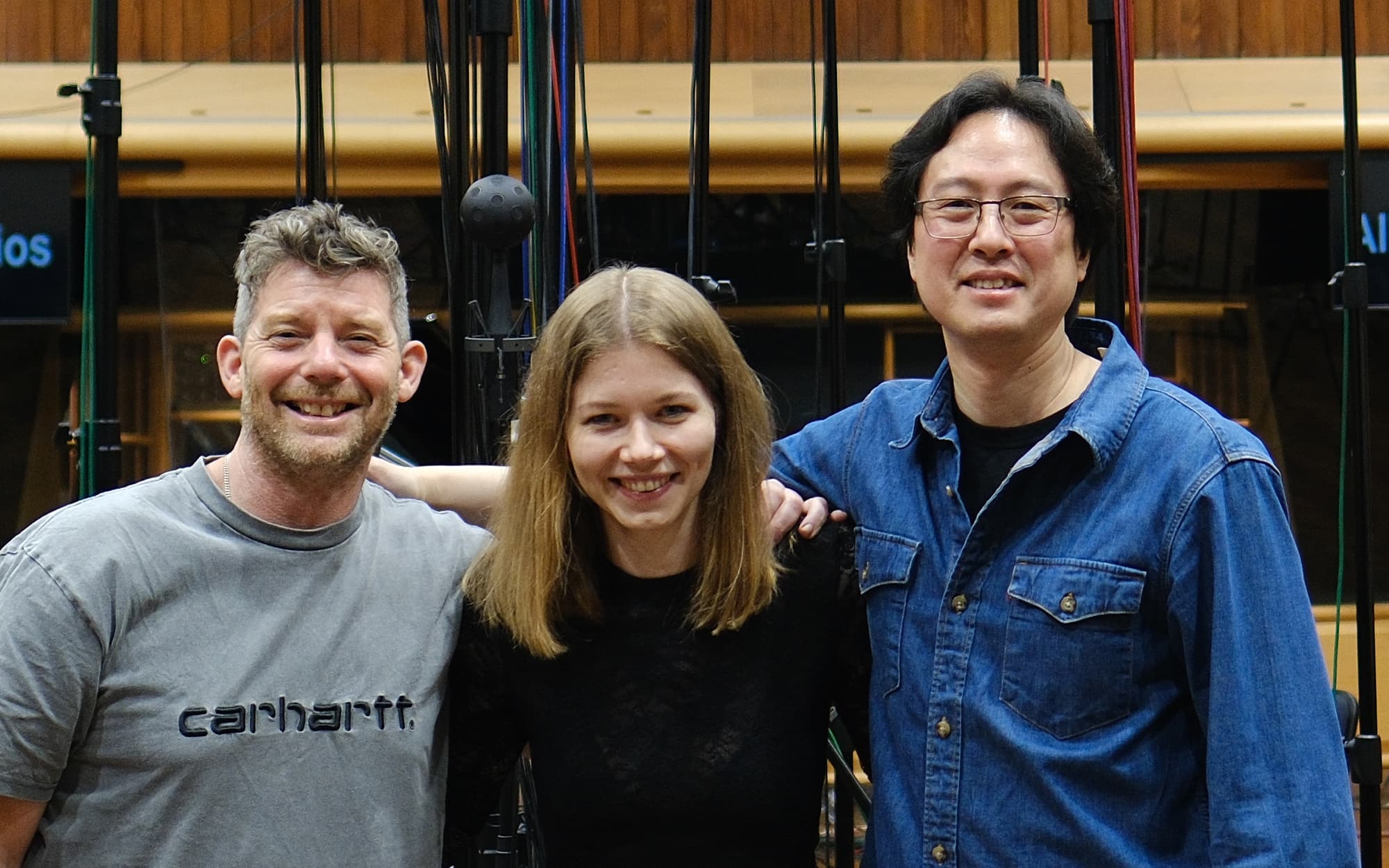

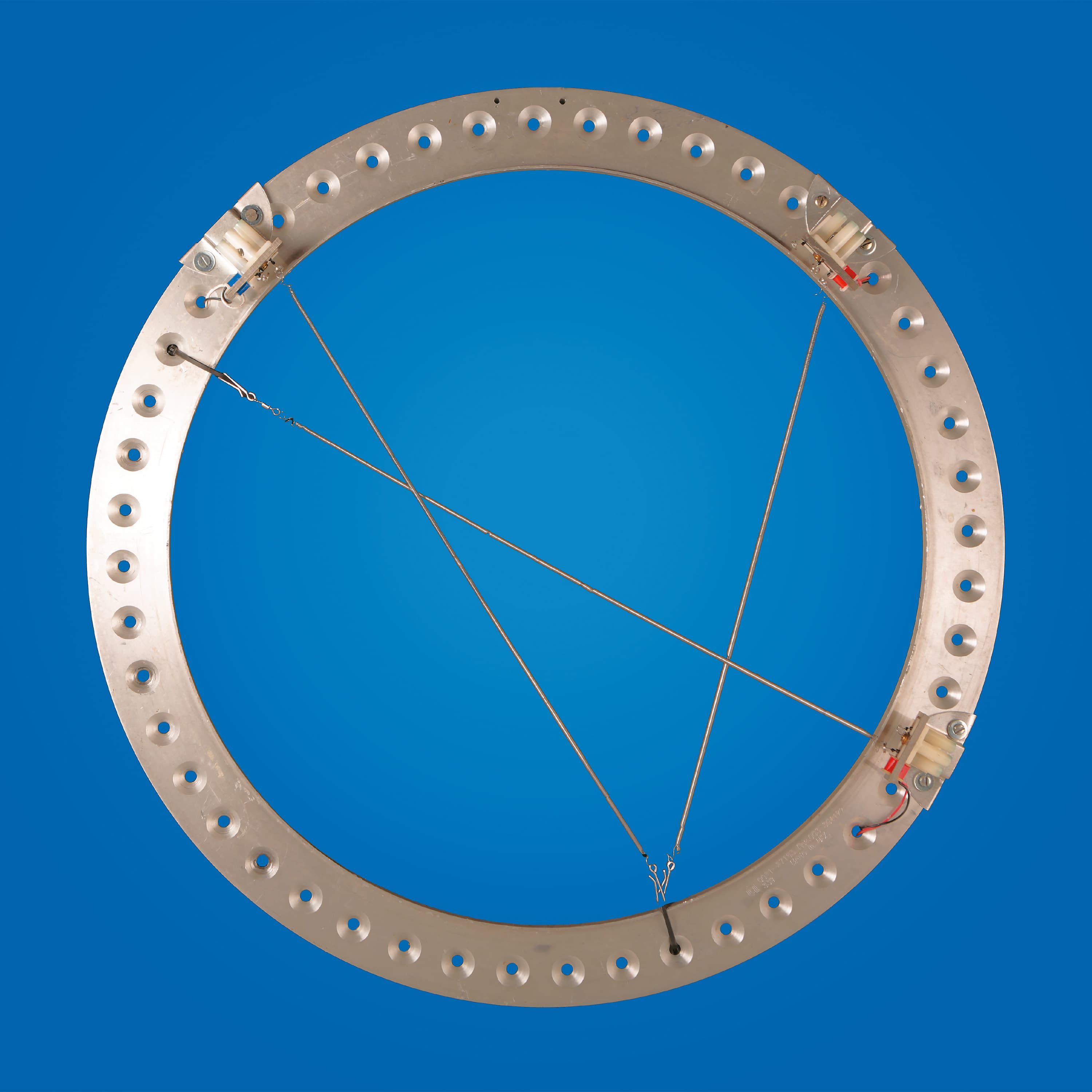

Umanesimo Artificiale works with exponential technologies to explore the evolving relationship between humanity and artificial intelligence. Its mission is to investigate what it means to be human in the age of AI. To that end, it fosters computational and creative thinking through the digital and performing arts and promotes a deeper connection between technology, art, and society.

Filippo Rosati www.umanesimoartificiale.xyz

Daniele Fabris www.danielefabris.com